aka a maybe possibly better way to script a stack

As I demonstrated in a recent post, you can script just about anything in AWS from the console using the Boto package for Python. I knew that in order to stand up EC2 instances, I was going to need a few things: a VPC, some subnets, and security groups. Turns out there are a few other things I needed, like an internet gateway and a routing table, but that comes later. As I wrote those Boto scripts, I found myself going out of my way to do a few things:

- provide helper functions to resolve “Name” tags on objects to their corresponding ID (or to just fetch an object by its Name tag, depending on which I needed)

- check for the existence of an object before creating it

- separate details about the infrastructure being created into config files

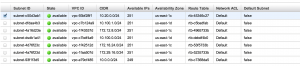

The first one was critical, because it doesn’t take long for your AWS console to become a nice bowl of GUID soup. The first time you see a table like this, you know you’ve got a problem:

I’ve obscured IDs to protect… something. Resources that probably won’t exist tomorrow. But believe me, changing those IDs took a long time, which is half my point: let’s get some name tags up in here.

The challenge is that you have to be vigilant about creating tags on objects with the key “Name,” and then you have to go out of your way to code support for that, because tagging is entirely optional. You want friendly names? Implement it yourself. This is your little cross to bear.

The second task was aimed at making the scripts idempotent. It’s very useful when building anything like this to be able to run it over and over without breaking something.

The third task was an attempt to decouple the infrastructure from boto (and optimistically, any particular IaaS) and plan for a “configuration as code” future. How nice would it be to commit a change to the JSON for a routing table and have that trigger an update in AWS?

Enter Cloudformation

So all this was working rather well, but before I delved too deep, I knew I needed to check out AWS Cloudformation. Bob Winding had described how it can stand up a full stack of resources; in fact, it does many of the things I was already trying to do:

- describe your infrastructure as JSON

- reference resources within the file by a friendly name

- stand up a whole stack with one command

In addition, it adds a lot of metadata tags to each object that allow it to easily tear down the whole stack after it’s created. As an added bonus, it provides a link to the AWS price calculator before you execute, giving you an indication of how much this stack is going to cost you on a month-to-month basis.

Nice! This is exactly what we want, right? The resource schema already exists and is well documented. Most of those things correspond to real-life hardware or configuration concepts! I could give this to any sysadmin or network engineer, regardless of AWS experience, and they should be able to read it right away.

The Catch

I like where this is going, and indeed, it’s a lovely solution. I have a few concerns — most of which I believe can be handled with good policy and processes. Still, they present some challenges, so let’s look at them individually:

1. One stack, one file.

You define a stack in a single JSON file. The console / command you use to execute it only runs one at a time, which you must name. The stack I was trying to run starts with a fundamental resource, the VPC. I can’t just drop and recreate that willy-nilly. It’s clear that there must exist a line between “permanent” infrastructure like VPCs and subnets and the more ephemeral resources at the EC2 layer. I need to split these files, and not touch the VPC once it’s up. However…

2. Reference-by-ID only works within a single file

As soon as you start splitting things up, you lose the ability to reference resources by their friendly names. You’re back to referencing relationships by ID. This is not just annoying: it’s incredibly brittle. AWS resource IDs are generated fresh when the resource is created, so the only way those references stay meaningful is if the resource you depend on is created once and only once. That’s not always what we want, and it’s extra problematic because…

3. Cloudformation is not idempotent (but maybe that’s good)

Run that stack twice, and you’ll get two of everything (usually). Now, this is actually a feature of CloudFormation. Define a stack, and then you can create multiple instances of it. If you want to update an existing stack, you can declare that explicitly. However, some resources have a default “update” behavior of “drop and recreate.” So if it’s a resource with dependencies, things get tricky. The bottom line here is that we have to be smart about what sorts of resources get bundled into a “stack,” so we can use this behavior as intended — to replicate stacks. And finally…

4. It’s not particularly fast

It just seems a bit slow. We’re talking like 2 minutes to go from VPC to reachable EC2 instance, but still. My boto scripts are a good deal faster.

There is a lot to like about CloudFormation. You can accomplish quite a bit without much trouble, and the resulting configuration file is easy to read and understand. Nothing here is a showstopper, as long as we understand the implications of our tool and process choices. We can always return to boto or AWS CLI if we need more control over the build process.

The Challenge of Governance

I don’t believe any of the difficulties outlined above are unique to CloudFormation. Keeping track of created resources and their various dependencies, deciding on the relative permanence of stack layers, and implementing a solution where parts of the infrastructure can truly be run as “configuration as code” are all issues that we must tackle as we get serious about DevOps practices. These are just the sorts of questions I have in mind for the first meeting of the DevOps / IaaS Governance Team this week.

We should feel encouraged that we’re not pioneers here! Let’s reach out to friends and colleagues in higher ed and in industry to see how these issues have played out before, and what solutions we may be able to adopt. When we know more, we’ll be that much more confident to proceed, and I can write the next blog post on what we have learned.

OIT staff can view my CloudFormation templates here.